Are AI Agents Benefiting Businesses?

On December 9th, 2025, Anthropic, the company behind Claude, released a report on the state of AI agent adoption across industry.

The goal of the report was to identify how AI is currently being adopted and what issues companies face when trying to adopt it. With the help of the research firm Material, Anthropic surveyed over 500 technical leaders in the US at companies of various sizes.

Participants were asked about everything from adoption, to sentiment towards AI, to the barriers preventing AI from being used more widely.

The Current Landscape

AI agents are being deployed across a variety of industries, and most leaders say they are already delivering benefits today.

The industry being impacted the most is software development. Given the vast amount of code available online, the documentation written by each language's creators, and the ability to verify whether code is right or wrong, it makes sense that LLMs are good at programming.

How Many Organisations Are Using AI Agents, How Complex Are Their Tasks, and How Much Autonomy Do They Have?

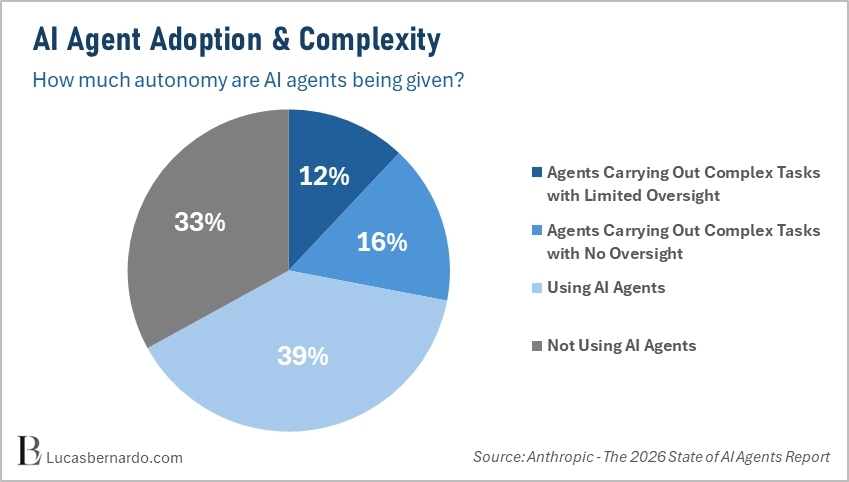

By the end of 2025, 57% of leaders had deployed multi-step AI agents. These were not simple chatbots handling a single task; instead, they carried out multi-step workflows, and 28% of participants gave them almost complete autonomy.

Only 33% of participants reported using no AI agents at all in their current workflows.

Case Study: Adoption of Coding Agents

It comes as no surprise that coding is one of the things AI agents do best. As mentioned above, the sheer volume of code and documentation online plays a big part. Data is important, but clean data is even more so.

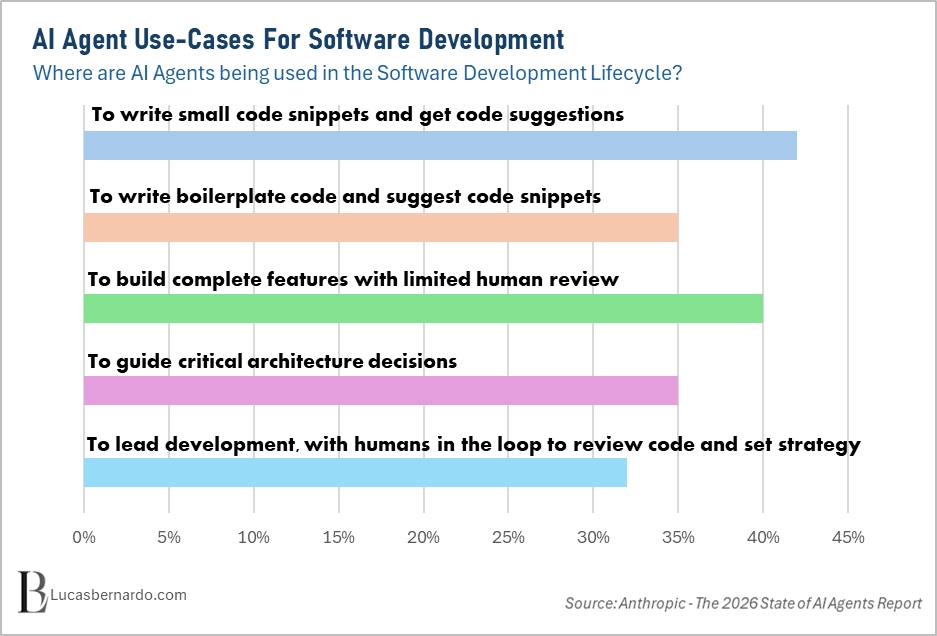

86% of leaders are using coding agents at some point in the Software Development Lifecycle (SDLC). This number should come as no surprise: most people already know how good AI is at coding, and since December 2025 the tone has shifted. Where people once dismissed AI as not being good enough, they are now shocked by just how good it is getting at writing code. One of the biggest success stories so far is OpenClaw.

I expect adoption of coding agents to be even higher by the end of this year. Claude Opus 4.6 and GPT-5.4 are crushing software development benchmarks, and I would not be surprised if the scores keep climbing.

Although overall adoption is high, only about 2 in 5 leaders use the full capabilities AI provides.

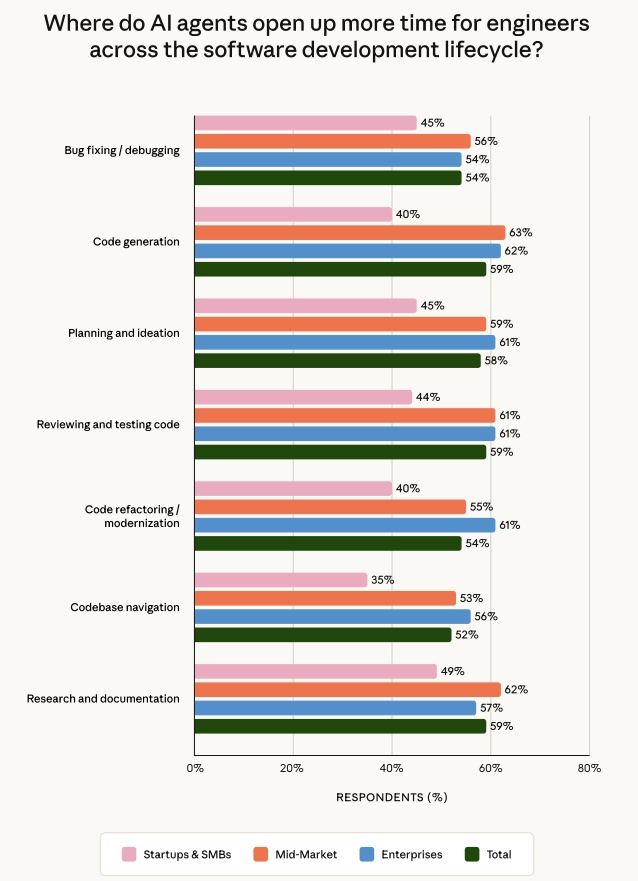

Among those who do use AI coding agents, more than half report that their productivity has been boosted.

Other Than Coding, What Is AI Good At?

AI agents offer more than the ability to generate code. In fact, the second most popular use of AI agents is research and reporting, at 56%. No surprise here: AI is trained on a wide range of data sources, and if you can set it up so that it reliably fetches the documents it needs for its research task (for example, through search or retrieval tools), LLMs are well suited to the job.

We also see a rise in areas such as supply chain optimisation, product development, and financial planning and analysis.

These emerging use cases all make sense. LLMs are brilliant at sifting through sources of information and analysing them. That is exactly what these areas require, so AI agents can help with them significantly.

Is AI Actually Producing Results?

According to the report, 80% of respondents believe that AI is producing measurable benefits for their organisations.

Looking further ahead, 88% of organisations expect AI to have a greater positive financial impact on their business over the next 12 months.

What Does The Future Hold?

96% of respondents are optimistic about the future. They expect AI agents to play a bigger role in business and believe that AI will continue to improve.

However, some problems need to be addressed so that this vision can be achieved.

- Integration with existing systems must be improved (46%).

- The ability to access high-quality data must also see improvements (42%).

- Cost of implementation must decrease (43%).

- Security & Compliance concerns must be addressed (40%).

What does this tell us?

For AI agents to see wider adoption, AI must become easier to use, cheaper, and more trustworthy. If AI providers do not address these issues, they will struggle to win more customers.

Yet whilst frontier labs focus on making AI more powerful, businesses care more about its usability. You could argue that greater intelligence makes AI more usable, but you could also argue that it is already good enough, and that instead of sprinting towards more capability, we should sprint towards usability.

The cost of doing so, however, could be reaching AGI later. After all, by most people's descriptions, AGI would be able to tackle any problem a human could solve, and that includes usability problems too. Delaying it is a risk no frontier lab is willing to take.

What Do Organisations Want From AI?

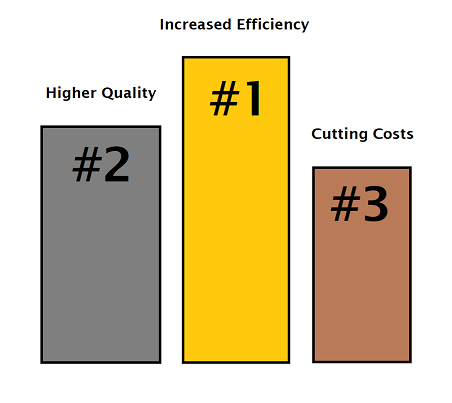

In first place is increased efficiency, at 44%. That is no shock: companies want AI to speed up how quickly their employees complete tasks.

37% of organisations also want higher-quality outputs from their AI agents. This is probably because they want more reliable answers and work that does not read like AI slop. This too makes sense: better output improves life both for the people producing the work and for the people consuming it.

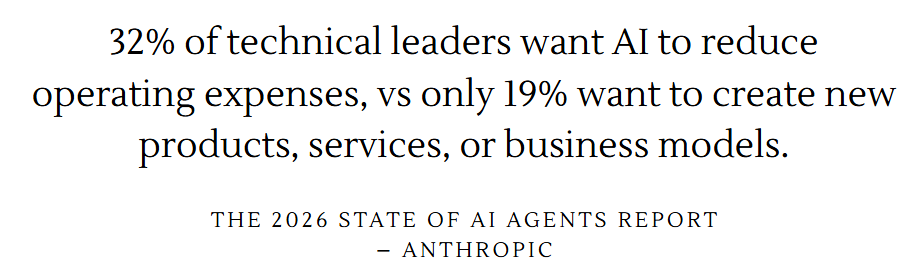

The data also uncovers a hypocrisy in what frontier AI labs say about AI and unemployment.

Many labs claim that it makes little sense to replace humans with AI; instead, it makes more sense to hire more people who can use AI, so that more work gets done. Other CEOs are pushing the same line, such as Dara Khosrowshahi, the CEO of Uber.

The data simply does not show that.

Personally, I find it odd that only 44% of technical leaders want increased efficiency; it would be nice to understand why.

I wonder whether the respondents who did not ask for these improvements are satisfied with what they already have, or whether they simply don't believe AI can deliver them and therefore do not ask.

Concerns About The Study

Overall, the report is very interesting; however, I must raise some red flags which, had they been addressed, would have made the report more verifiably trustworthy.

- What is the definition of an AI Agent?

The term "AI agent" is still relatively new, and, much like AGI, its definition is a moving goalpost. Was a definition provided before the questions were answered, or did the report assume that the leaders knew what an AI agent was? If no definition was given, the results could be inaccurate; if one was, stating it in the report would add credibility.

- How did Anthropic select the participants for the study?

I would assume Material recruited the participants for the report; however, I would like to know how it decided whom to survey. Were participants hand-picked from Anthropic's customers? Did Material randomly select technical leaders? Did it use clients it had already worked with? Knowing how the participants were selected would let us check for bias and understand the spread of industries.

- Are there multiple participants from the same organisation?

I suspect this might be the case, because the report does not use "organisations" and "participants" interchangeably. That would hurt its reliability: two respondents from the same company would count that company's answer twice (for example, when measuring AI agent adoption).

- How do we measure efficiency and productivity?

Unfortunately, I suspect that efficiency and productivity were self-reported rather than measured objectively. You might feel you are getting more done, but that is not always true; our brains are good at fooling us. That is why these measurements should be objective. One option would be revenue per employee.
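To make the double-counting concern about multiple participants per organisation concrete, here is a minimal Python sketch. The responses are invented for illustration and are not data from the report. It also assumes an organisation counts as an adopter if any of its respondents says yes; that aggregation rule is a hypothetical choice, not something the report describes.

```python
# Hypothetical survey responses: (organisation, uses_ai_agents).
# These rows are invented for illustration; they are NOT data from the report.
responses = [
    ("Org A", True),
    ("Org A", True),   # a second respondent from the same organisation
    ("Org B", False),
    ("Org C", True),
    ("Org D", False),
]

def rate_per_participant(rows):
    """Share of individual respondents who report using AI agents."""
    return sum(uses for _, uses in rows) / len(rows)

def rate_per_organisation(rows):
    """Share of distinct organisations where at least one respondent
    reports using AI agents (an assumed aggregation rule)."""
    by_org = {}
    for org, uses in rows:
        by_org[org] = by_org.get(org, False) or uses
    return sum(by_org.values()) / len(by_org)

print(f"{rate_per_participant(responses):.0%}")   # 60%
print(f"{rate_per_organisation(responses):.0%}")  # 50%
```

With just one organisation answering twice, the per-participant adoption rate (60%) comes out higher than the per-organisation rate (50%). This is the kind of skew that knowing the number of participants per organisation would rule out.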

Authentically Written By Lucas Bernardo