Claude Mythos - Everything You Need To Know About Anthropic's Most Powerful LLM

Claude Mythos, currently the most powerful model created by Anthropic, has been officially announced.

For those unaware, Anthropic is one of the leading frontier labs in the AI Arms Race, created by former OpenAI employees who disliked its leadership and wanted to focus primarily on AI safety. Their decision to withhold Claude Mythos seems to reinforce their guiding principles.

Mythos was originally leaked in late March, after security researchers found Anthropic's misconfigured CMS. The leaked documents revealed that Anthropic was looking at releasing an even more powerful model, surpassing its current frontier model, Claude Opus.

In this leak, Mythos was described as having superior reasoning and cyber skills compared to its predecessor. In fact, it was so good that Anthropic was planning to restrict its release, so that it would benefit the world of security rather than harm it.

On April 7, 2026, Claude Mythos was officially announced, alongside a project to make the world of software a safer place – Project Glasswing.

How Good is Claude Mythos?

Anthropic already has arguably the strongest LLM for coding in Claude Opus 4.6.

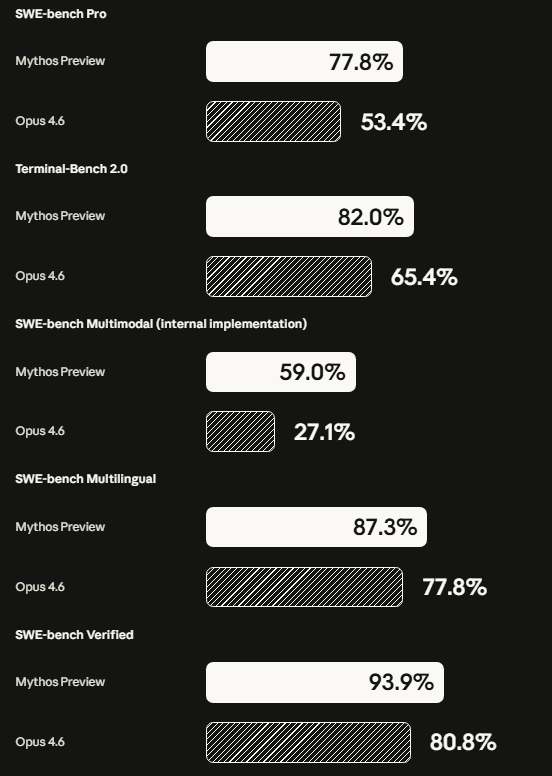

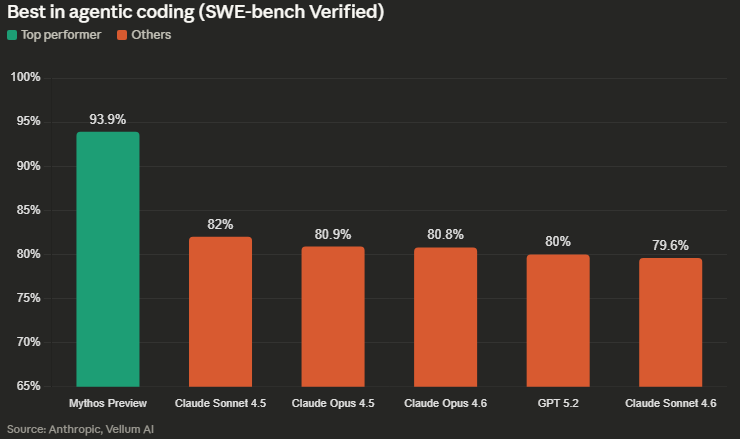

Anthropic has released results on SWE benchmarks, and the improvements appear to range from 10% to 50%.

Not only do these results beat Anthropic's previous models, but they also beat every other competitor in the field.

If what Anthropic says is true, this could be a massive game-changer for software development, even after the programming revolution in December 2025.

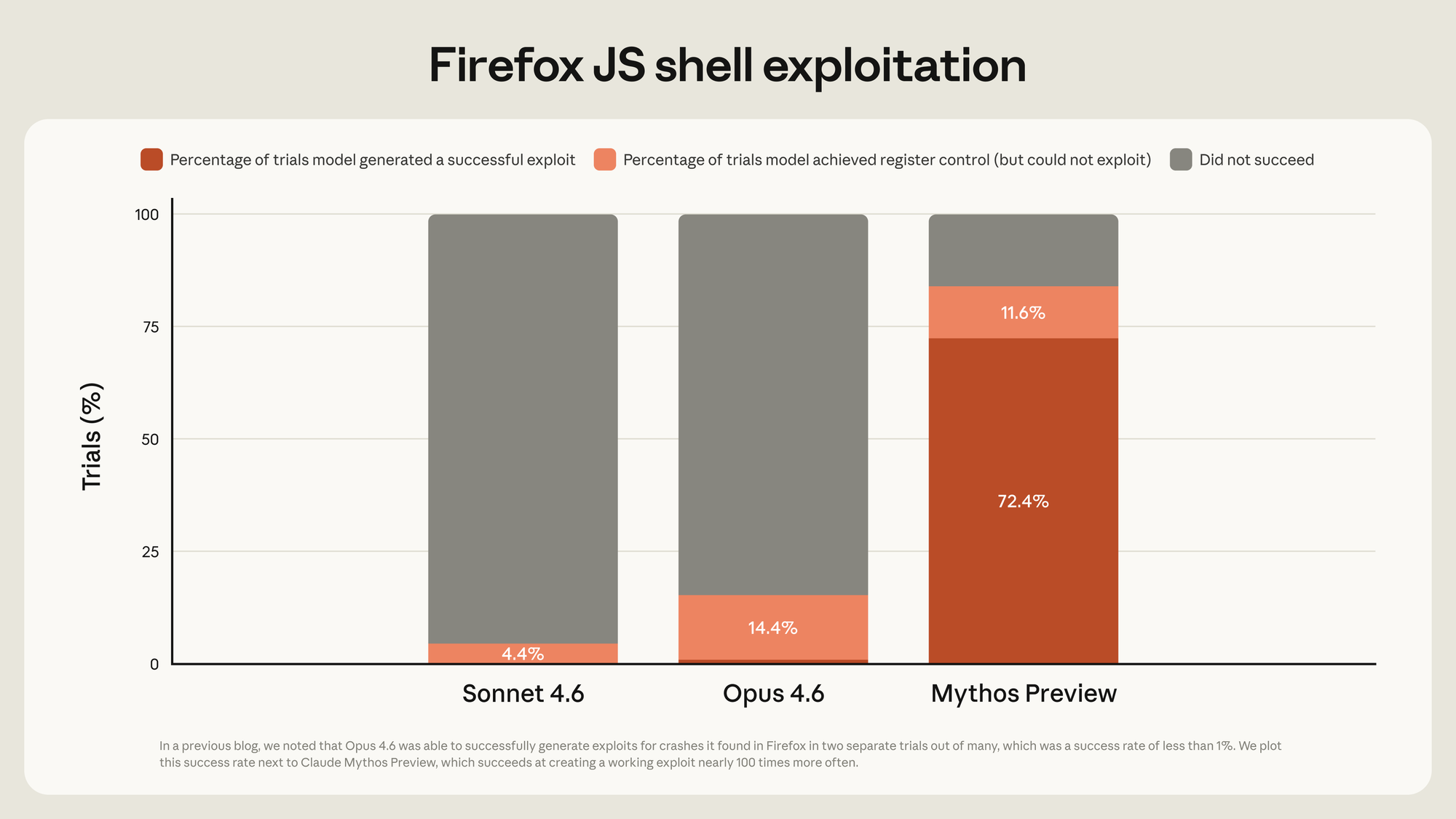

Claude Mythos has already found some vulnerabilities, including ones decades old, such as the 27-year-old bug in OpenBSD (an operating system known for its security).

Mythos also seems capable of chaining multiple vulnerabilities together and carrying out multiple MITRE ATT&CK paths, as described in one instance:

"Mythos Preview wrote a web browser exploit that chained together four vulnerabilities, writing a complex JIT heap spray that escaped both renderer and OS sandboxes. It autonomously obtained local privilege escalation exploits on Linux and other operating systems by exploiting subtle race conditions and KASLR-bypasses. And it autonomously wrote a remote code execution exploit on FreeBSD’s NFS server that granted full root access to unauthenticated users by splitting a 20-gadget ROP chain over multiple packets."

Mythos even allowed non-security experts to identify vulnerabilities that could be exploited instantly.

I'm only scratching the surface here: Claude Mythos has found thousands more critical- and high-severity vulnerabilities. More details can be found in Anthropic's red-team blog.

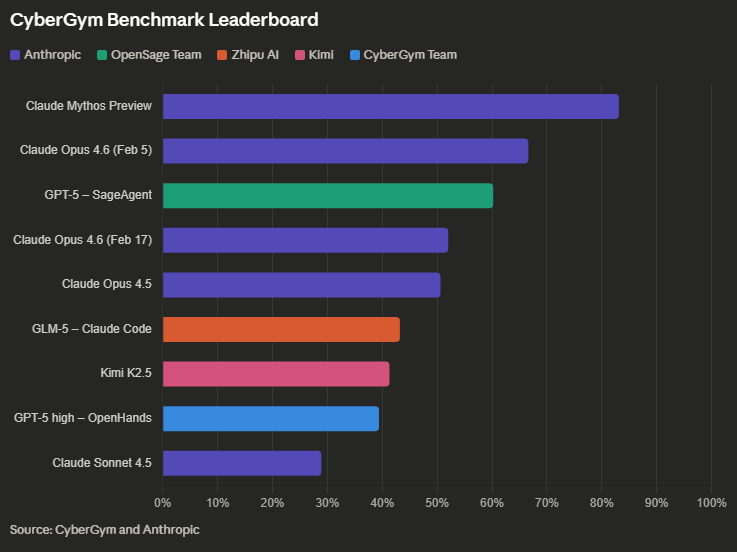

Lastly, Claude Mythos has been tested on CyberGym, a benchmark that evaluates an AI agent's real-world cybersecurity capabilities. The results highlight just how powerful this new LLM is.

What is Project Glasswing?

Project Glasswing is the first initiative of its kind, aiming to give the "good guys" a chance to better protect themselves from cybersecurity attacks before Mythos is released into the wider world.

Essentially, it brings together some of the biggest companies in the world and allows them to use Claude Mythos first to scan their software for vulnerabilities.

In fact, all Cloud Service Providers (CSPs) are allowing their customers to access private previews, likely through a heavily vetted process, to ensure the LLM does not fall into the wrong hands.

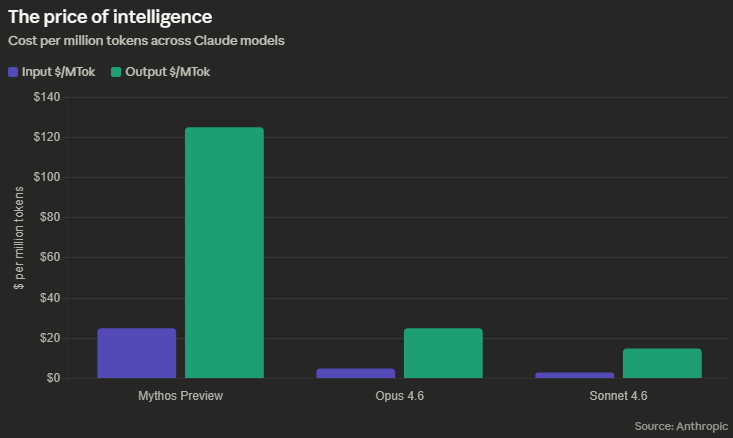

Once Phase 1 of Project Glasswing is finished, Claude Mythos will be available to participants, though at a steep cost.

It is still unclear when it will become widely available; hopefully, the delay will give the 12 organisations above plenty of time to fix their vulnerabilities.

Anthropic has also committed millions of dollars in tokens to open-source foundations as part of Project Glasswing.

Project Glasswing is also available to the US government for both attack and defence operations.

Lastly, in 90 days, Anthropic will produce a report detailing everything that was discovered during this project. The project itself seems to be scoped for quite a few months, so it will be interesting to see what Anthropic and its partners are able to find.

What Does This Mean For AI?

This release may have set a precedent for AI safety. It now looks reckless for AI frontier labs to release powerful LLMs out of nowhere, without giving organisations the ability to protect themselves first. In fact, if Sam Altman, Elon Musk, or Demis Hassabis were to release a model this powerful, they would be ostracised for being unsafe.

This may even have slowed the pace at which new AI models are released, naturally cooling the AI arms race, which could be a good thing.

Honestly, this seems like a great idea by Dario Amodei and his team. Gatekeeping Claude Mythos shows that Dario is serious about deploying AI safely.

Ultimately, the success of this approach will have to be monitored. Whether it holds up once Claude Mythos is released publicly is a completely different story.

What Does This Mean For Cybersecurity?

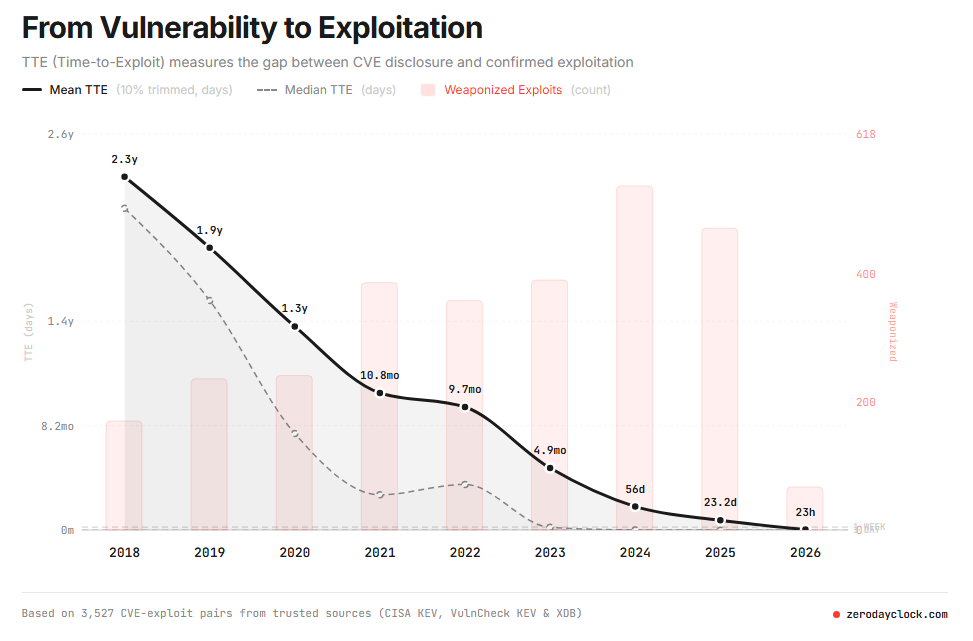

One thing is clear: AI is increasing the speed at which vulnerabilities are being exploited, and also increasing the number of vulnerabilities being found.

With that in mind, Claude Mythos could be a massive game-changer in the cybersecurity world.

The fact that defenders will have the first-mover advantage for this technology is seriously beneficial and means that, for the first time, we may see defenders having the high ground.

Whether this stays the case once Claude Mythos is widely available is a different story.

This calls into question the valuation of certain cybersecurity companies, such as those that deal specifically with software vulnerabilities, and even those that offer penetration tests. Will they use this new tool as a way of improving their services and making them more affordable (which is desperately needed for SMEs), or will they begin to cut costs? Will they even be needed at all?! Yes, probably.

What Does This Mean For Anthropic?

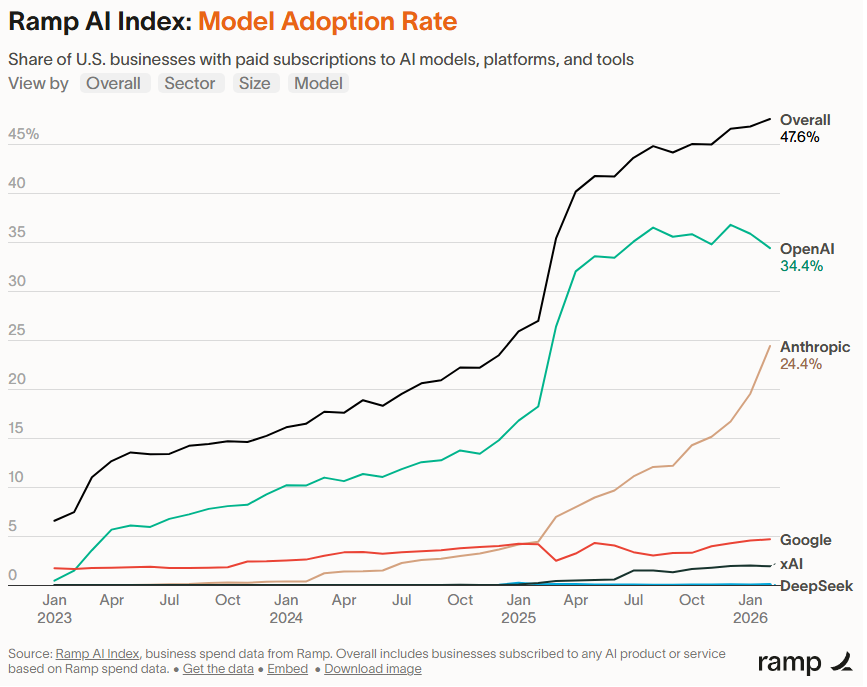

If you ask anyone using AI, they will tell you Anthropic is currently shipping so many innovations and releases that it's hard to keep up with what it is doing. It is making massive moves in the AI world.

For the first time, Anthropic has a first-mover advantage. Even Claude Code, by far its most popular product, wasn't the first coding CLI on the market, although it did become the most popular.

Furthermore, Anthropic is really digging its heels in and making Claude the go-to LLM for coding.

I wouldn't be surprised to see this boost Anthropic's revenue and even its adoption. The company was already growing at quite a fast pace after the DOW incident in early March. Anthropic's revenue is already increasing exponentially: it was $9 billion in late 2025, $14 billion in early February, and $30 billion as of early April. It has even overtaken OpenAI in run-rate revenue, despite holding a much smaller market share than its competitor.
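To put those revenue figures in perspective, here is a minimal Python sketch that works out the growth multiple between each pair of data points. The only inputs are the three figures quoted above; the function name `growth_multiples` and the labels are my own, and because the dates are approximate ("late 2025", "early February", "early April") I have deliberately not tried to turn these into monthly growth rates.

```python
# Run-rate revenue figures quoted in this article, in billions of USD.
# The period labels are approximate, so no exact time spans are assumed.
revenue = [
    ("late 2025", 9),
    ("early February", 14),
    ("early April", 30),
]


def growth_multiples(points):
    """Return (start_label, end_label, multiple) for each consecutive pair."""
    return [
        (start[0], end[0], end[1] / start[1])
        for start, end in zip(points, points[1:])
    ]


for start, end, multiple in growth_multiples(revenue):
    print(f"{start} -> {end}: x{multiple:.2f}")

# Overall multiple from the first figure to the last.
overall = revenue[-1][1] / revenue[0][1]
print(f"overall: x{overall:.2f}")
```

The second jump (roughly x2.14) is larger than the first (roughly x1.56), which fits the picture of accelerating growth, although three loosely dated points are not enough to pin down the exact shape of the curve.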

Will this solidify Anthropic as the "safe" AI company, or will it be quickly forgotten at a time when it seems like humans have a memory of just a few days?

Nonetheless, this has definitely improved their reputation, even whilst it was already on the rise. Will Anthropic keep up this pace? I guess we will find out.

Authentically written by Lucas Bernardo.